Technical foundation

Layered for growth, boring where it matters.

The architecture favors explicit interfaces, service boundaries, and local persistence over hidden automation. That keeps the product easier to extend and easier to trust.

WebView layer

Readable surface

Modern HTML, CSS, and JavaScript deliver markdown, code rendering, and long-session usability through WebView2.

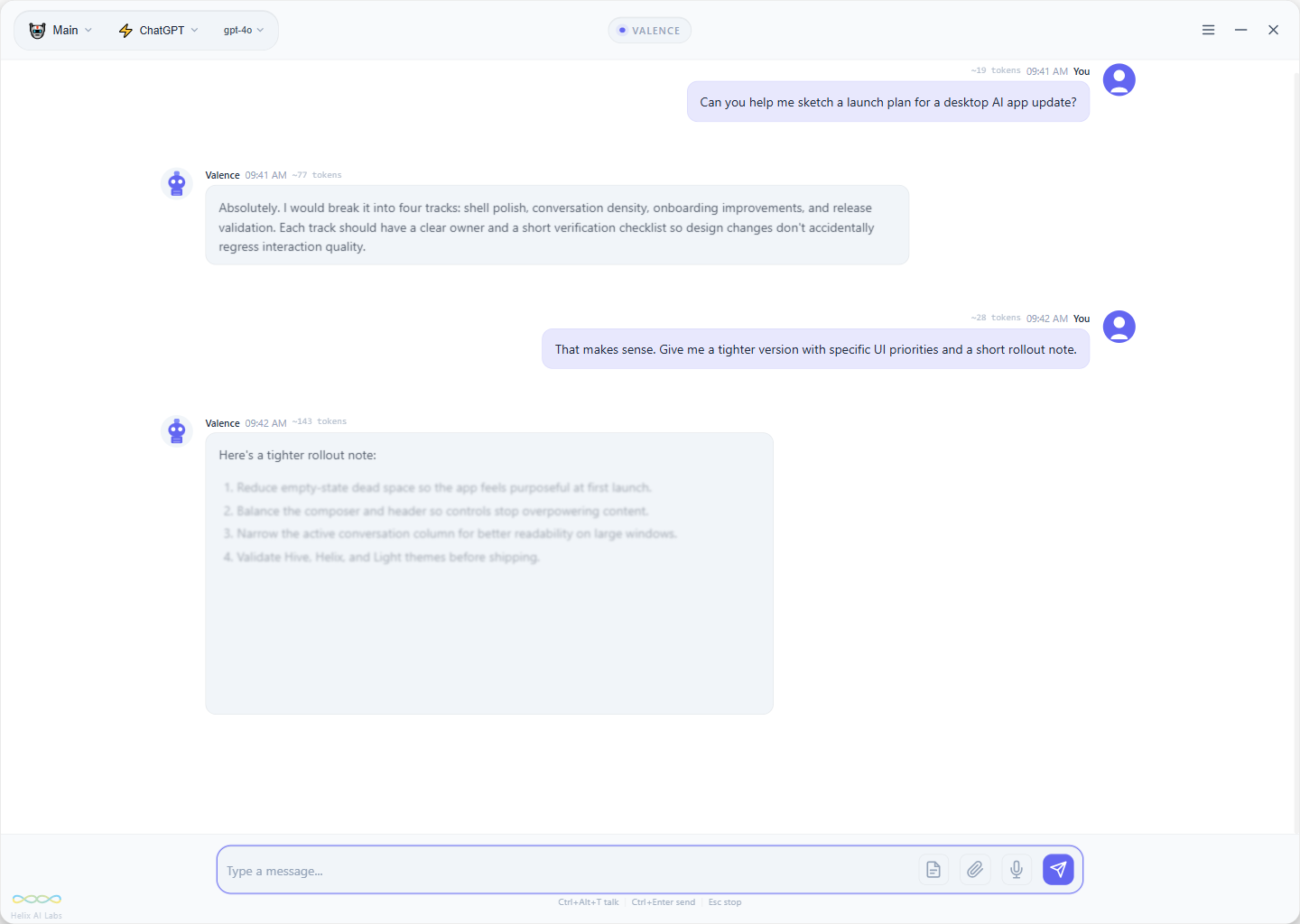

Orchestration

Coordinated flow

ChatWindow and the configuration flow coordinate lifecycle, navigation, and user-facing state without burying the logic.

Components

Single-responsibility pieces

Header, message area, and input surfaces stay intentionally scoped so the interface can evolve without cascading rewrites.

Provider system

ILLMProvider abstractions

Provider adapters sit behind a shared interface and factory pattern so new model families can be added with clear seams.

Abilities & MCP

Commands plus real tools

Built-in abilities, trigger systems, and MCP support give the model a practical action layer when the workflow calls for it.

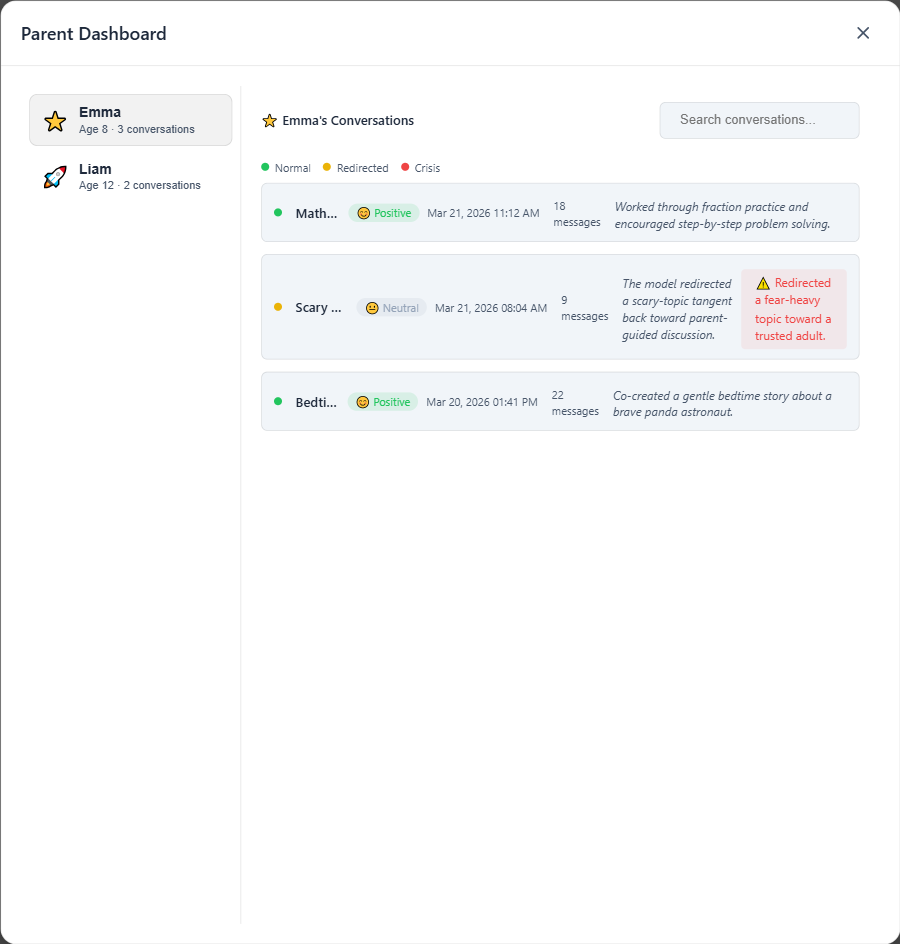

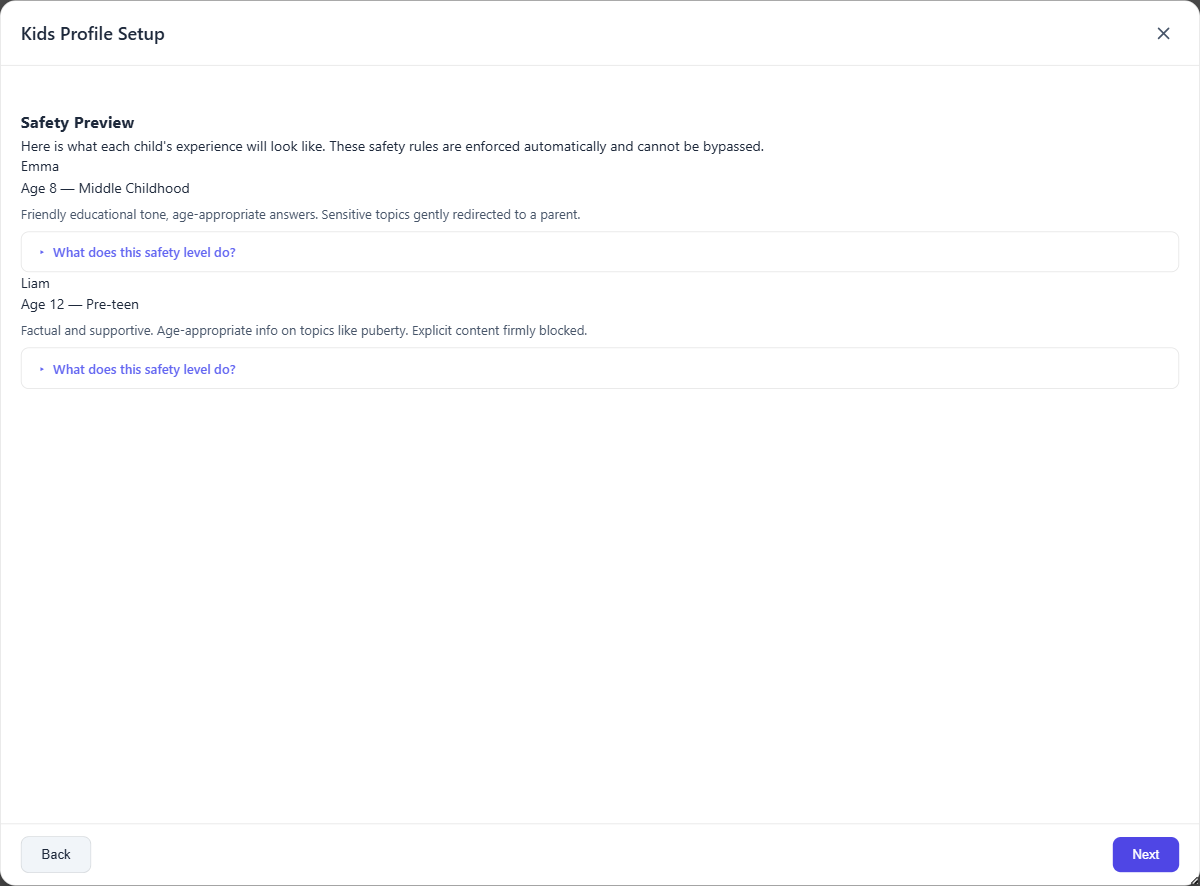

Profiles

Task-specific posture

ProfileService manages custom personas, system prompts, and preferences so different workflows can keep their own operating discipline.

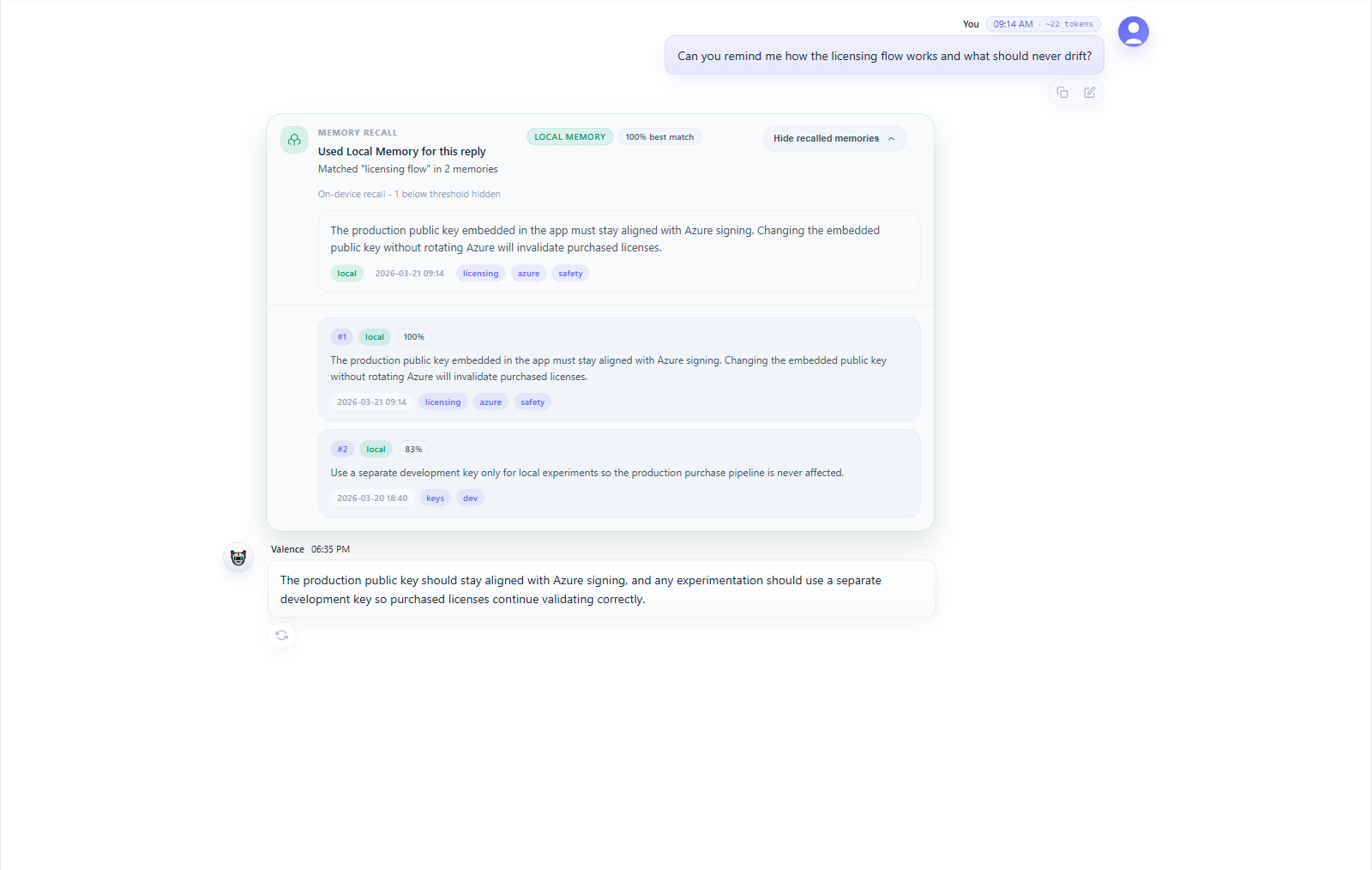

Persistence

Conversation durability

ChatStorageService keeps full message metadata in JSON so sessions can be reopened, exported, and audited later.

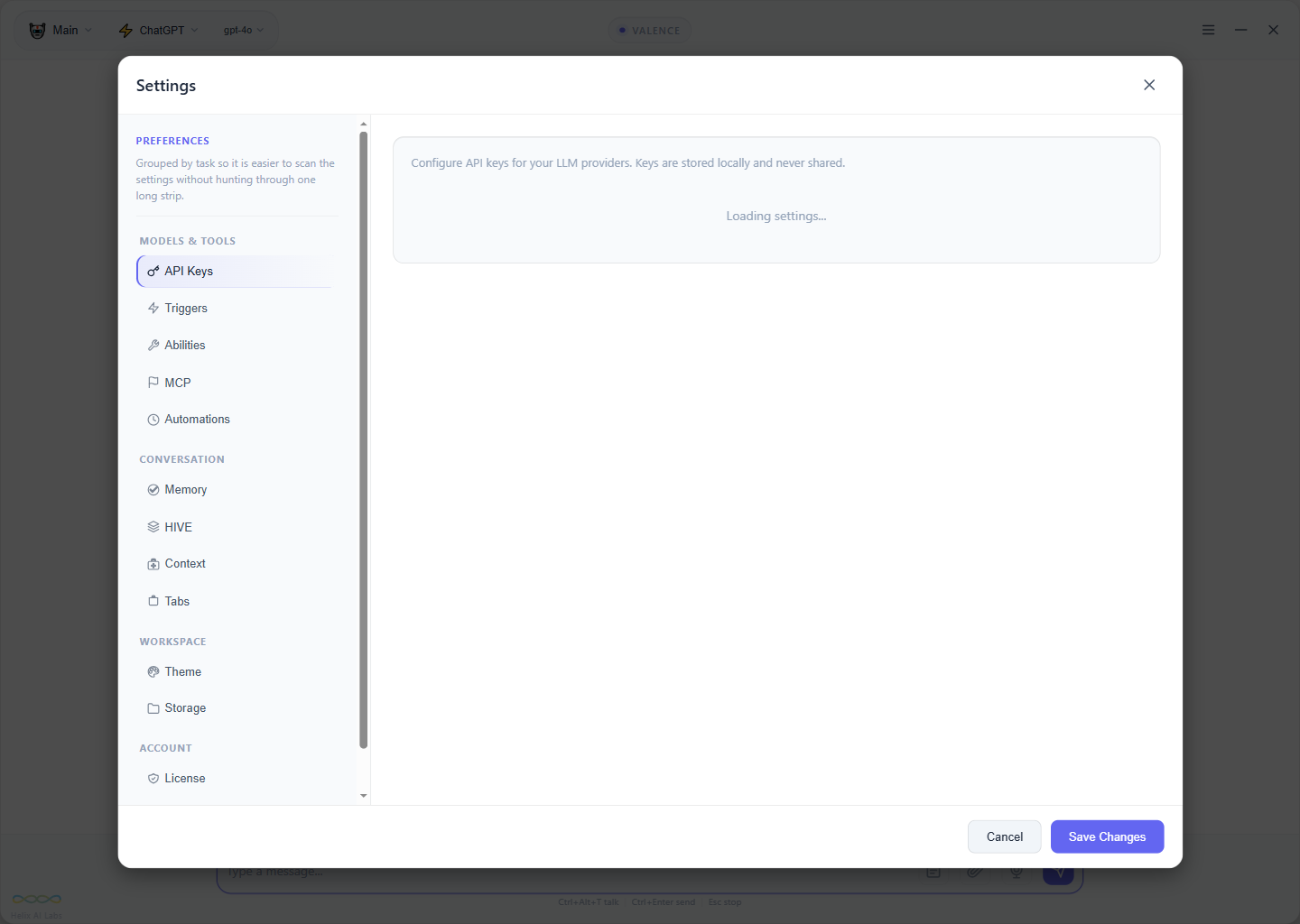

Settings

Explicit configuration

SettingsFactory, validation, API key management, and provider configuration stay inspectable instead of disappearing into the background.

Theme

Consistent visual language

ThemeColors.ModernHive keeps the UI coherent across components so density and readability hold up as the surface expands.